The -nl option adds newlines between files. This will create a new file on your local directory that contains all the files from a directory and concatenates all them together. You can also bulk upload a chunk of files via: hdfs dfs -put *.txt /test1/ The reason I want to do this so I can show you a very interesting command called getmerge.Ĭoncatenate all the files into a directory into a single file Usage: hdfs dfs -getmerge Sometimes you want to test a user's permissions and want to quickly do a write. This works the same as Linux Touch command.

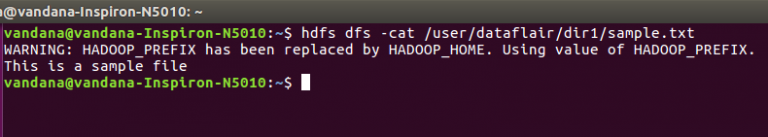

Many tools including Hive, Spark history and BI tools will create directories and files as logs or for indexing.Ĭreate an empty file in an HDFS Directory Usage: hadoop fs -touchz. Often you won't realize how many files and directories you actually have in HDFS. R is another great one to drill into subdirectories. h shows in human readible sizes, recommended. You can choose any path from the root down, just like regular Linux file system. To List All the Files in the HDFS Root Directory Usage: hdfs dfs -ls ĭrwxrwxrwx - yarn hadoop 0 16:05 /app-logs The first command I type every single day is to get a list of directories from the root. The following are always helpful and usually hard or slower to do in a graphical interface. The one universal and fastest way to check things is with the shell or CLI. There is a detailed list of every command and option for each version of Hadoop.Įvery day I am looking at different Hadoop clusters of various sizes and there will be various tools for interacting while HDFS files either via web, UI, tools, IDEs, SQL and more. I also recommend installing all the clients it recommends including Pig and Hive. The easiest way to install is onto a jump box using Ambari to install the Hadoop client. The HDFS client can be installed on Linux, Windows, and Macintosh and be utilized to access your remote or local Hadoop clusters. This guide is for Hadoop 2.7.3 and newer including HDP 2.5. This replaces the old Hadoop fs in the newer Hadoop. This is a runner that runs other commands including dfs. The first way most people interact with HDFS is via the command line tool called hdfs. There are many ways to interact with HDFS including Ambari Views, HDFS Web UI, WebHDFS and the command line. It uses the -skipTrash flag to force the immediate deletion of the files.The Hadoop File System is a distributed file system that is the heart of the storage for Hadoop.

The following example uses the HDFS rmr command from the Linux command line to delete the directories left behind in the HDFS storage location directory /user/dbamin. See Apache's File System Shell Guide for more information.
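The cleanup described above can be wrapped in a small shell function. This is a sketch, assuming the hdfs client is installed and on the PATH; the function name hdfs_purge is hypothetical (not part of Hadoop), and it uses -rm -r rather than the deprecated -rmr. Because of -skipTrash, anything it deletes cannot be restored from the trash.

```shell
# Hypothetical helper (not part of Hadoop): permanently delete an HDFS path
# and everything beneath it.
#   -rm -r      recursive delete; the non-deprecated form of -rmr
#   -skipTrash  bypass the trash directory, so the data cannot be restored
hdfs_purge() {
  if [ -z "$1" ]; then
    echo "usage: hdfs_purge <hdfs-path>" >&2
    return 1
  fi
  hdfs dfs -rm -r -skipTrash "$1"
}
```

For example, hdfs_purge /user/dbamin/some-leftover-dir, where some-leftover-dir is a placeholder for whichever directory was left behind.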
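The -ls options covered above (-R to recurse, -h for human-readable sizes) combine naturally, and a recursive listing is also a quick way to see how many files and directories have piled up. A minimal sketch, assuming the hdfs client is on the PATH; both helper names are hypothetical, and counting entries by the first character of each permission string ('d' for directories, '-' for regular files) is an assumption about the standard -ls output format.

```shell
# Hypothetical helpers (not part of Hadoop) built on hdfs dfs -ls.

# Recursively list a path (default: the root, /) with human-readable sizes.
hdfs_tree() {
  hdfs dfs -ls -R -h "${1:-/}"
}

# Count directories and regular files under a path (default: /) using the
# first character of each permission string: 'd' = directory, '-' = file.
# "|| true" keeps grep's non-zero exit status on zero matches from
# aborting scripts that run with set -e; grep still prints 0.
hdfs_count_entries() {
  listing=$(hdfs dfs -ls -R "${1:-/}") || return 1
  dirs=$(printf '%s\n' "$listing" | grep -c '^d' || true)
  files=$(printf '%s\n' "$listing" | grep -c '^-' || true)
  echo "directories=$dirs files=$files"
}
```

On a large cluster a recursive listing from / can produce a lot of output and take a while, so a narrower path such as hdfs_tree /app-logs is a gentler place to start.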
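For the permission check mentioned in the touchz section, a create-then-clean-up pattern can be scripted. This is a sketch, assuming the hdfs client is on the PATH and that the function runs as the user whose permissions you want to test; the function name hdfs_can_write and the .write_test_ marker file name are hypothetical.

```shell
# Hypothetical helper (not part of Hadoop): check whether the current user
# can write to an HDFS directory by creating an empty marker file with
# -touchz and then deleting it again.
hdfs_can_write() {
  dir="${1%/}"                     # drop a trailing slash, if any
  marker="$dir/.write_test_$$"     # illustrative name; $$ is this shell's PID
  if hdfs dfs -touchz "$marker"; then
    hdfs dfs -rm -skipTrash "$marker" >/dev/null
    echo "writable: $dir"
  else
    echo "not writable: $dir" >&2
    return 1
  fi
}
```

A failure does not always mean a permissions problem: touchz also fails if, for example, the directory does not exist, so check the error message hdfs prints.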
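The bulk-upload-then-getmerge workflow described earlier fits in one shell function. A sketch under these assumptions: the hdfs client is on the PATH, the name hdfs_upload_and_merge is hypothetical, and the -mkdir -p step is an addition so that -put has a directory to copy into.

```shell
# Hypothetical helper (not part of Hadoop): upload local files into an HDFS
# directory, then merge everything in that directory into one local file.
#   $1     HDFS directory, for example /test1
#   $2     local output file
#   $3...  local files to upload
hdfs_upload_and_merge() {
  if [ "$#" -lt 3 ]; then
    echo "usage: hdfs_upload_and_merge <hdfs-dir> <local-out> <file>..." >&2
    return 1
  fi
  hdfs_dir="$1"
  local_out="$2"
  shift 2
  # -mkdir -p creates the directory (and any parents) if it does not exist.
  # -nl appends a newline after each file so files without a trailing
  # newline do not run together in the merged output.
  hdfs dfs -mkdir -p "$hdfs_dir" &&
    hdfs dfs -put "$@" "$hdfs_dir/" &&
    hdfs dfs -getmerge -nl "$hdfs_dir" "$local_out"
}
```

Mirroring the commands above, hdfs_upload_and_merge /test1 merged.txt *.txt uploads the local .txt files to /test1 and writes their concatenation to merged.txt in the current local directory.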
